Navigating the Evolving Landscape and Future of Artificial Intelligence

Let’s break down what the spread of artificial intelligence means for you and for work across the United States. More than 60 countries now run national strategies meant to share the benefits of these powerful systems while lowering their risks.

This guide explains core ideas in plain terms. You’ll learn how AI tools shape productivity and everyday software. We keep jargon light and focus on clear steps you can trust.

By examining the current landscape, we aim to give you practical insight. Expect concrete examples, simple explanations, and tips to help you adapt as this technology enters more workplaces and homes.

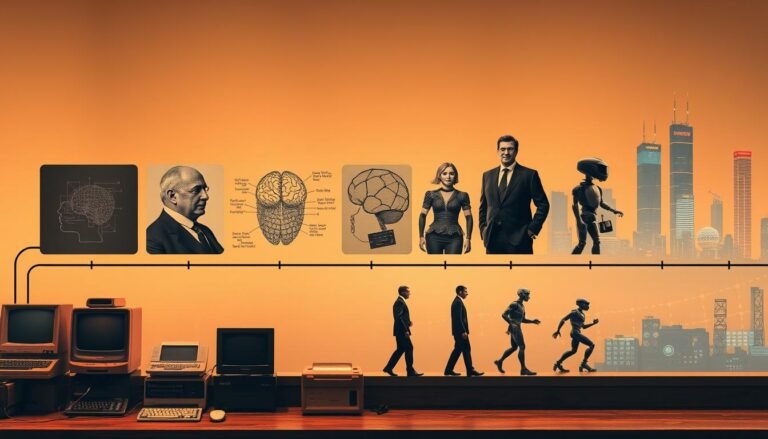

Understanding the Evolution of Artificial Intelligence

Tracing key breakthroughs helps us see how these systems grew into everyday tools. Below is a brief look at the milestones that shaped research, development, and how people now use these systems.

| Milestone | Description |
|---|---|
| 1955 Founding | John McCarthy coined the term "artificial intelligence" in the proposal for the 1956 Dartmouth workshop. |
| 1997 Deep Blue | IBM’s computer beat Garry Kasparov in a landmark match. |
| Generative Surge | Tools moved from niche labs to everyday use by people and industry. |

Foundational Milestones

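In 1955, John McCarthy coined the term "artificial intelligence" in a proposal for the 1956 Dartmouth workshop, which is widely treated as the field’s founding as an academic discipline. That step launched decades of research across many fields.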

In 1997, IBM’s Deep Blue beat world chess champion Garry Kasparov in a six-game match. That victory showed machines could tackle complex strategic tasks once thought reserved for humans.

The Generative AI Surge

Over the years, the way we handle data shifted. Researchers moved from rule-based systems to statistical methods that let models learn patterns from large datasets.

Today, generative tools put creative power in the hands of everyday people. That change reshaped how tasks get done across industry and daily life.

Key advantage: Understanding these steps helps you see why current tools feel smarter and more useful than earlier systems.

Defining the Future of Artificial Intelligence

Read on to see how systems that learn and decide will shape what you use every day.

Designers now build models that mimic basic thinking: they learn patterns, weigh options, and pick actions. This shift makes tools more helpful for routine tasks at work and home. Key advantage: smarter tools can free time for higher-value work.

Over the next few years, user-friendly platforms will help this technology reach more people. Expect tighter integration with apps you already use, plus new services that reduce repetitive work.

- Broader task handling: models will cover more varied jobs.

- Accessible tools: simpler interfaces for nonexperts.

- Guided development: emphasis on inclusive, safe outcomes.

| Building Block | Description |
|---|---|
| Learning Models | Systems that adapt from data |
| User Platforms | Apps that simplify advanced features |
| Guided Design | Tools focused on fairness and safety |

By understanding these core ideas now, you can better spot the potential ahead and make smart choices about how to adopt these tools.

The Shift Toward Efficient and Specialized Models

Smaller, targeted models are reshaping how teams solve real business problems. This change lets developers reach working solutions faster and at lower cost.

Smaller architectures, faster results

Key advantage: compact models cut training time and make deployment practical for more companies.

| Model | Description |
|---|---|
| Llama 3.1 | Openly available model; the largest version has 405 billion parameters |
| GPT-4o mini | Compact model tuned for speed; reported at roughly 11 billion parameters, though OpenAI has not published a figure |
| Specialized models | Tailored for language and data needs |

- Developers are moving away from relying only on massive models toward efficient ones such as GPT-4o mini, reported at roughly 11 billion parameters (OpenAI has not published a figure).

- Affordability and efficiency let industry teams solve complex problem sets with fewer resources.

- Openly released models such as Meta’s Llama 3.1 promote collaboration and keep high performance accessible to researchers and developers.

- Specialized models match specific language and data needs, reducing training time and speeding use in workflows.
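
To see why compact models are easier to deploy, it helps to estimate how much memory the weights alone take up. The sketch below is a back-of-the-envelope calculation, not a benchmark. It assumes 16-bit weights (2 bytes per parameter) and ignores activations and caches. It compares the largest Llama 3.1 release (405 billion parameters) with the 11 billion figure this article cites for a compact model. The function name `weight_memory_gb` is made up for illustration.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float = 2.0) -> float:
    """Approximate memory needed just to store the weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Assumption: 16-bit weights. The 11B figure is the estimate quoted in this article.
models = {
    "Llama 3.1 (largest release, 405B)": 405e9,
    "compact model (~11B, article's figure)": 11e9,
}

for name, params in models.items():
    print(f"{name}: ~{weight_memory_gb(params):.0f} GB of weights")
```

Under these assumptions the larger model needs hundreds of gigabytes for weights alone, while the compact one fits in the memory of a single high-end accelerator. That gap is a big part of why smaller models are cheaper to run.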

Advancements in Multimodal Systems

When systems see, hear, and read, they can answer richer questions and guide people more naturally. This shift helps a machine combine cues the way humans do, so interactions feel clearer and faster.

Multimodal systems let models process visuals, voice, and text together. By mixing data streams, these platforms deliver context that single-input systems miss.

By 2034, we expect assistants and chatbots to offer bespoke visual guides, step-by-step video help, or annotated images in response to complex queries. Key advantage: more intuitive help that saves time and reduces confusion.

| System Type | Description |
|---|---|
| Visual+Voice Agents | Respond with images and spoken steps for tasks |
| Contextual Assistants | Use mixed data to tailor replies to users |
| Multimodal Chatbots | Combine text, audio, and visuals for richer chat |
| Tutorial Generators | Auto-create short videos from user questions |

These systems narrow the gap between raw pattern learning and the way people combine sight, sound, and language. Developers will keep improving capabilities, so applications become more helpful across work and daily life.

Potential: as multimodal tools mature, they open new ways for people to interact with technology and handle multi-step tasks with ease.

The Rise of Agentic AI in Daily Workflows

Digital agents can now take the lead on routine operations, freeing people to focus on judgment work.

Agentic systems run multistep workflows using specialized algorithms. They gather data, call services, and complete tasks without constant handholding.

That reduces the time teams spend on repeat work and lets staff manage exceptions and strategy instead.

Human-AI Collaboration

In practice, models handle general language chores while tailored agents supply deep domain expertise for specific needs.

This collaboration makes assistants and chatbots more useful in support desks, sales ops, and network diagnostics.

- Agents monitor systems, flag issues, and take corrective steps.

- Employees act as orchestrators, directing agents to run tasks across apps and return results.

- Teams keep control through clear rules and oversight.
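
The pattern described above (gather data, call services, act, and keep a person in the loop) can be sketched in a few lines. This is a toy illustration, not a real agent framework. The `Agent` class, the step functions, the hard-coded latency value, and the `checkout` service name are all hypothetical stand-ins.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Runs named steps in order and hands control back to a person on failure."""
    steps: list  # (name, function) pairs; each function maps a state dict to a new one
    log: list = field(default_factory=list)

    def run(self, state: dict) -> dict:
        for name, step in self.steps:
            try:
                state = step(state)
                self.log.append(f"ok: {name}")
            except Exception as exc:
                # Oversight rule: stop and flag the run for a human instead of guessing.
                self.log.append(f"escalate: {name} ({exc})")
                state = {**state, "needs_human": True}
                break
        return state


def gather_metrics(state):
    # Stand-in for a real monitoring call; the value is hard-coded for the demo.
    return {**state, "latency_ms": 950}


def diagnose(state):
    return {**state, "issue": "slow" if state["latency_ms"] > 500 else None}


def remediate(state):
    action = "restart_service" if state["issue"] == "slow" else "none"
    return {**state, "action": action}


agent = Agent(steps=[("gather", gather_metrics),
                     ("diagnose", diagnose),
                     ("remediate", remediate)])
result = agent.run({"service": "checkout"})
print(result["action"], agent.log)
```

The design choice worth noticing is the `except` branch: when a step fails, the agent stops and marks the run for review, which is one simple way to keep the "clear rules and oversight" the list above calls for.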

| Agent Type | Description |
|---|---|
| Workflow Agent | Automates multi-step business processes |
| Support Assistant | Handles customer queries via chatbots |
| Monitoring Agent | Detects and fixes routine network issues |

Transforming Industries Through Automation

Many companies now lean on automated tools to speed up work and raise quality across departments. As of 2024, about 42 percent of enterprise-scale firms had deployed artificial intelligence to handle repetitive jobs and boost consistency.

Key advantage: these systems free people from dull chores so they can focus on strategic thinking and creative problems.

From manufacturing lines to clinical labs, machines now perform precise assembly and diagnostic steps. That shift affects many fields, cutting error rates and improving throughput.

- Automation reduces dangerous or monotonous tasks, improving safety.

- Workers move toward higher-value roles that need emotional intelligence.

- Over the coming years, deeper integration is expected to lift efficiency across global operations.

| Application | Description |
|---|---|
| Production Agent | Automates assembly and quality checks |
| Clinical Assistant | Supports diagnostic workflows in hospitals |
| Workflow Bot | Handles routine admin tasks for teams |

Adoption brings trade-offs. Some fear job displacement, but new roles emerge that require judgment and oversight. Embracing these technologies helps organizations raise standards and unlock new opportunities.

The Role of Synthetic Data in Model Training

Teams now turn to generated datasets to keep model training robust when human data is scarce. This shift helps protect user privacy while letting developers continue building and testing systems.

Ensuring Data Quality and Privacy

Enterprises use synthetic data so models learn from diverse examples without scraping personal records. That reduces privacy issues and supports ethical practices across the industry.

Key advantage: high-quality synthetic inputs let models handle more varied tasks and remain resilient during real-world use.

- Generated datasets address learning gaps when human samples are limited.

- Companies invest in quality checks to keep the process reliable and repeatable.

- Clear governance helps resolve data ethics and privacy concerns and maintain public trust.
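
Here is a minimal sketch of the idea: learn simple statistics from a small real sample, generate new rows from them, then run two basic checks. It is deliberately naive. It treats each column as an independent bell curve, and it carries no formal privacy guarantee. The sample records, column names, and function names are all invented for illustration.

```python
import random
import statistics


def synthesize(real_rows, n, seed=0):
    """Draw n synthetic rows from per-column Gaussians fitted to the real rows."""
    rng = random.Random(seed)
    columns = list(real_rows[0].keys())
    params = {
        c: (statistics.mean(r[c] for r in real_rows),
            statistics.stdev(r[c] for r in real_rows))
        for c in columns
    }
    return [{c: rng.gauss(*params[c]) for c in columns} for _ in range(n)]


def leaks_real_rows(real_rows, synthetic_rows):
    """Quality check: True if any synthetic row exactly copies a real record."""
    real = {tuple(sorted(r.items())) for r in real_rows}
    return any(tuple(sorted(s.items())) in real for s in synthetic_rows)


# Hypothetical "real" sample; a production dataset would be far larger.
real_rows = [
    {"age": 34, "monthly_spend": 120.0},
    {"age": 45, "monthly_spend": 310.5},
    {"age": 29, "monthly_spend": 80.0},
    {"age": 52, "monthly_spend": 400.0},
    {"age": 41, "monthly_spend": 220.0},
    {"age": 38, "monthly_spend": 150.0},
]

synthetic = synthesize(real_rows, n=500, seed=1)
print("exact copies of real rows:", leaks_real_rows(real_rows, synthetic))
print("real mean age:", statistics.mean(r["age"] for r in real_rows))
print("synthetic mean age:", round(statistics.mean(s["age"] for s in synthetic), 1))
```

Real pipelines use richer generators and stronger privacy tests, but the shape is the same: fit, generate, then validate before the data reaches a model.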

| Component | Description |
|---|---|
| Synthetic Corpus | Generated examples for model training |
| Quality Suite | Tools that validate data and detect bias |
| Privacy Guard | Processes that anonymize and audit inputs |

Breakthroughs in Hardware and Quantum Computing

Cutting-edge silicon and qubits could bring problems once out of reach within range.

| Technology | Description |
|---|---|
| Quantum Processors | Use qubits to tackle combinatorial problem sets |
| BitNet Ternary Models | Use three-state parameters (-1, 0, +1) to cut energy use |
| Hybrid Silicon | Pairs specialized chips with quantum accelerators |

Why it matters: Quantum computing is expected to handle certain complex problems, such as combinatorial optimization and physical simulation, that classical computers struggle with. That opens new paths in research and applied science.

Researchers are also building BitNet models that use ternary parameters, where each weight takes one of three values (-1, 0, or +1). These models process data more efficiently and lower the power needs of large models.
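
To make the ternary idea concrete, here is a minimal sketch of squeezing ordinary weights into three values. It follows the absmean recipe described for BitNet b1.58 as we understand it: scale by the mean absolute weight, round, then clip to the range -1 to +1. Real BitNet models apply this during training, not as an after-the-fact conversion. The function names and sample weights are illustrative assumptions.

```python
def ternarize(weights, eps=1e-8):
    """Map each weight to -1, 0, or +1 using the mean absolute value as the scale."""
    scale = sum(abs(w) for w in weights) / len(weights)
    quantized = [max(-1, min(1, round(w / (scale + eps)))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate weights: each one is now -scale, 0, or +scale."""
    return [q * scale for q in quantized]


weights = [0.9, -0.05, 0.4, -1.2, 0.02]  # made-up example values
q, scale = ternarize(weights)
print(q)                      # [1, 0, 1, -1, 0]
print(dequantize(q, scale))   # coarse approximation of the original weights
```

Because every weight is one of three values, multiplication reduces to adding, subtracting, or skipping, which is where the energy savings are expected to come from.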

Combining quantum hardware with specialized silicon could speed up processing for massive models. This hybrid approach aims to push past the limits of conventional infrastructure.

- Faster processing helps model training and real-time analysis.

- Lower power use makes large-scale development more practical for teams.

- New tools will let us simulate biology and physics scenarios that classical machines would take millennia to run.

Navigating Global Regulatory Frameworks

Regulators worldwide are shaping rules that will guide how models get built and used.

The EU AI Act entered into force in August 2024, with most obligations scheduled to apply from August 2026, and it is already a global benchmark. It targets high-risk systems and asks for strong transparency and clear safeguards.

Key advantage: these rules push developers to bake in privacy and accountability from the start.

As capabilities grow, policies must evolve to cover autonomous systems and their risks. Ongoing research helps balance innovation with safety and fundamental rights.

- Clear standards reduce legal uncertainty and support responsible deployment.

- Transparency measures help users trust how systems make decisions.

- Cross-border alignment makes it easier for teams to scale compliant solutions.

| Measure | Description |
|---|---|
| EU AI Act | Regulatory framework for high-risk systems |
| Transparency Rules | Disclosure and explainability requirements |
| Privacy Guardrails | Data handling standards to protect users |

For you and your team, the takeaway is simple: follow clear standards, engage with ongoing research, and design systems that protect people while enabling useful technology.

Addressing Ethical Concerns and Algorithmic Bias

Ethics and bias are now central questions that every developer and team must answer. When models affect hiring, lending, or news, small errors can harm people and jobs.

Deepfakes and Misinformation

Deepfakes and misleading content erode trust. We need detection tools, verified sources, and clear labels so users can tell what is real.

Algorithmic Fairness

Bias in training data can reinforce social inequality. Teams must audit algorithms, diversify datasets, and publish fairness tests.

Data Security Risks

Loose data controls expose sensitive records. Strong encryption, access logs, and strict retention rules reduce risk and protect privacy.

Key steps to act now:

- Audit algorithms regularly for disparate outcomes.

- Deploy deepfake detection and source verification.

- Harden data storage and require minimal retention.

- Adopt clear ethics guidelines and accountability paths.
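
As a starting point for the first step, an audit can be as simple as comparing how often each group receives a favorable outcome. The sketch below computes selection rates and their ratio. The 0.8 threshold reflects the "four-fifths" rule of thumb from US hiring guidance and is a screening signal, not a legal verdict. The records, group labels, and function names are hypothetical.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, selected) pairs. Returns favorable-outcome rate per group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        positives[group] += int(selected)
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by highest; 1.0 means equal rates across groups."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical screening outcomes: 6 of 10 selected in group A, 3 of 10 in group B.
records = ([("A", True)] * 6 + [("A", False)] * 4
           + [("B", True)] * 3 + [("B", False)] * 7)

rates = selection_rates(records)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
if ratio < 0.8:
    print("Flag for review: selection rates differ more than the four-fifths guideline.")
```

A low ratio does not prove the model is unfair, but it tells a team where to look first.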

| Safeguard | Description |
|---|---|
| Deepfake Guard | Tools to detect manipulated media |
| Fairness Audit | Checks for biased outcomes in algorithms |
| Privacy Shield | Encryption and access controls for sensitive data |

The Economic Impact of Intelligent Systems

The economic ripple from smarter systems is already reshaping jobs, profits, and how companies run day-to-day.

Key point: by some estimates, artificial intelligence could add as much as USD 4.4 trillion to the global economy, acting as a powerful engine for productivity and new business workflows.

Change brings trade-offs. By 2030, an estimated 92 million jobs could be displaced while 170 million new roles appear, a net gain of about 78 million. That shift favors those who learn new skills and adapt processes.

| Factor | Description |
|---|---|
| Economic Gain | USD 4.4 trillion added via productivity and new services |
| Job Shift | 92M displaced vs 170M new roles by 2030 |
| Model Energy | Training can consume an estimated 50 GWh of electricity (example: GPT-4) |
| Data Insights | Firms use data to speed decision-making and scale |

Actionable advice: manage energy and people together. Track power use from training and redesign workflows so machine tools amplify human judgment.
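
If you want a first-pass number for power tracking, a simple estimate multiplies accelerator count, average power draw, and run time, then adjusts for data center overhead. The sketch below does that. The cluster size, power draw, run length, and overhead factor are hypothetical placeholders, and `training_energy_mwh` is an illustrative name, so swap in measured values from your own jobs.

```python
def training_energy_mwh(num_accelerators, avg_power_kw, hours, pue=1.0):
    """Estimated energy in MWh. pue (power usage effectiveness) scales for cooling and overhead."""
    return num_accelerators * avg_power_kw * hours * pue / 1000


# Hypothetical run: 1,000 accelerators at 0.5 kW each for 30 days, with 20% overhead.
run_mwh = training_energy_mwh(num_accelerators=1000, avg_power_kw=0.5,
                              hours=30 * 24, pue=1.2)
print(f"Estimated energy: {run_mwh:.0f} MWh")

# For scale, compare with the ~50 GWh (50,000 MWh) estimate cited in this article.
print(f"Share of a 50 GWh run: {run_mwh / 50_000:.2%}")
```

Logging this figure per training job gives you a baseline, so you can see whether workflow changes actually reduce energy use.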

When businesses use data-driven insights, they scale faster and cut repetitive work. The true benefits arrive when teams pair tools with clear oversight and retraining programs.

Moonshot Innovations Shaping the Next Decade

Moonshot work in post‑Moore computing and federated approaches is unlocking scale and privacy at once.

Post‑Moore chips aim to boost processing power without just shrinking transistors. That shift changes how we design hardware and fits new model needs.

Federated training spreads learning across devices. Each device trains on its own data and sends only model updates to a central model, so raw data never leaves the device. That helps protect privacy while still letting the shared model improve.
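
A toy version of this process, often called federated averaging, fits in a few lines. In the sketch below each "device" holds a handful of points from the line y = 3x, trains a one-number model locally, and the server averages the results weighted by how much data each device has. The data, learning rate, and function names are assumptions chosen for illustration. Real systems add secure aggregation, client sampling, and much larger models.

```python
def local_update(w, data, lr=0.1, epochs=5):
    """A few gradient-descent steps on squared error for y = w * x, using only local data."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(w_global, clients):
    """Each client trains locally; the server only sees weights, never the raw data."""
    total = sum(len(data) for data in clients)
    updates = [(local_update(w_global, data), len(data)) for data in clients]
    return sum(w * n for w, n in updates) / total


# Hypothetical devices, each holding private samples of y = 3x.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(0.5, 1.5), (1.5, 4.5), (1.0, 3.0)],
]

w = 0.0
for _ in range(20):
    w = federated_round(w, clients)
print(round(w, 4))  # approaches 3.0, the slope shared by all devices
```

Notice that `federated_round` never touches the `(x, y)` pairs directly. Only the locally trained weight crosses the boundary, which is the privacy property the paragraph above describes.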

Key advantage: these approaches let teams handle larger context windows and tougher tasks while keeping user data local.

- Reducing the quadratic cost of transformer self-attention, which grows with the square of the context length, makes longer context windows practical.

- Efficient algorithms plus new hardware cut training time and energy use.

- Compressed innovation means decades of development may arrive in just a few years.
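
The quadratic scaling in the first bullet is easy to see with a quick count. Standard self-attention scores every token against every other token, so doubling the context quadruples the work. The sketch below compares that with a sliding-window variant, one family of fixes in which each token only looks at a fixed number of neighbors. The window size of 512 and the function names are illustrative assumptions, and the counts ignore heads, layers, and constant factors.

```python
def full_attention_scores(context_len: int) -> int:
    """Every token attends to every token: n * n scores."""
    return context_len * context_len


def windowed_attention_scores(context_len: int, window: int = 512) -> int:
    """Each token attends to at most `window` tokens: n * min(n, window) scores."""
    return context_len * min(context_len, window)


for n in (1_000, 2_000, 4_000, 8_000):
    print(f"{n:>5} tokens: full={full_attention_scores(n):>12,} "
          f"windowed={windowed_attention_scores(n):>10,}")
```

With the full method, going from 1,000 to 8,000 tokens multiplies the count by 64. With the window, it grows only 8 times, in step with the context length.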

| Innovation | Description |
|---|---|
| Post‑Moore Chips | Specialized silicon that boosts throughput for models |
| Federated Systems | Distributed training that preserves private data |
| Quadratic Fixes | New algorithms that cut context scaling costs |

For you and your team, the takeaway is simple: invest in experiments that pair new hardware with smarter algorithms. That combo will deliver real insights and make complex work more manageable today.

Conclusion

Here’s a practical takeaway: when teams combine thoughtful design with clear oversight, technology lifts people’s work and widens opportunity.

Key point: embracing change can create new job paths and boost skills while keeping daily tools more helpful and reliable.

We must stay alert to ethics, test for fairness, and build safeguards that protect everyone. By doing this, artificial intelligence becomes a tool for empowerment rather than a source of harm.

Stay curious, learn often, and join conversations that shape the future of these systems. Together we can make choices that help people thrive alongside smarter tools.

FAQ

What key milestones shaped modern AI?

Early advances included symbolic reasoning and expert systems, followed by statistical learning, deep neural networks, and recent breakthroughs in generative models and large-scale language models from organizations like OpenAI, Google DeepMind, and Meta.

How did generative models change practical applications?

Generative models enabled realistic text, image, and audio synthesis, boosting tools for content creation, design, code generation, and virtual assistants that speed workflows and lower production costs.

Why are smaller, specialized models gaining attention?

Compact architectures reduce latency, energy use, and deployment cost while allowing tailored performance for tasks like medical imaging, voice assistants, and edge devices where efficiency and privacy matter.

What are multimodal systems and why do they matter?

Multimodal systems combine text, images, audio, and video to understand context better. They improve search, accessibility, and content understanding across fields like healthcare, media, and education.

What does agentic AI mean for daily workflows?

Agentic systems can plan, prioritize, and execute multi-step tasks autonomously, acting as copilots that handle routine work (scheduling, research, or data prep) while humans supervise and provide judgment.

How can businesses use synthetic data responsibly?

Synthetic data helps train models when real data is scarce or sensitive. Best practice includes validating synthetic sets for quality, avoiding leakage of real records, and using privacy-preserving generation methods.

What hardware trends enable faster model training?

Advances in GPUs and specialized accelerators (like Google’s TPUs) are lowering training time and power consumption for large models, and interest is growing in photonic and quantum approaches.

How are governments approaching regulation?

Regulators worldwide focus on transparency, safety standards, and accountability. Policies often target high-risk uses (biotech, finance, public services) while encouraging innovation through clear guidelines.

What steps address deepfakes and misinformation?

Detection tools, provenance tracking, watermarking generated content, and media literacy campaigns help mitigate misuse. Platforms and researchers collaborate to flag and remove harmful content.

How do we reduce algorithmic bias?

Techniques include diverse training data, fairness-aware model design, bias audits, and continual monitoring. Inclusive teams and stakeholder engagement also help spot blind spots early.

What are the main data security risks with intelligent systems?

Risks include model inversion, data leakage, and poisoned training data. Strong encryption, access controls, secure pipelines, and robust validation guard against these threats.

How will intelligent systems impact jobs and the economy?

Automation will shift many roles, removing routine tasks while creating new jobs in development, oversight, and domain-specialized work. Investment in reskilling and policy support eases transitions.

What are current moonshot areas in research?

Ambitious targets include generalist agents that learn across domains, energy-efficient training, trustworthy explanation methods, and integrating quantum computing for novel model capabilities.